The world’s most successful con man is not in finance or politics. He is the scientist who once ran the laboratory behind the world’s biggest machine. He has defrauded the US taxpayer of tens of billions of dollars, and he’s not done yet.

This is the story of particle physics and its kingpin, Carlo Rubbia.

A Field Forged in Fear

Particle physics is the study of matter and space at their most fundamental level, the tradition whose most famous scientists are Newton and Einstein. For centuries, physicists went about their business largely unnoticed by the public. Then came nuclear weapons.

History’s most famous equation was given to us by Einstein: E = mc². To military planners, the equation is important because it says that matter can be converted to pure energy. Prior to World War II, chemical munitions tapped only about a twenty-billionth of that explosive potential. The atom bomb showed that a chemical explosion could set off a nuclear fission reaction releasing roughly twenty million times more energy per kilogram. Less than a decade later, atom bombs were used to trigger fusion in the hydrogen bomb, which releases roughly four times as much energy per kilogram of fuel as fission.
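As a rough check on the scale of these jumps, here is a back-of-envelope sketch comparing the energy released per kilogram by chemical explosive, uranium fission, and deuterium-tritium fusion with the full rest energy mc². The inputs are assumed textbook round numbers (4.184 MJ per kilogram of TNT, about 200 MeV per U-235 fission, 17.6 MeV per D-T fusion), and the sketch ignores how much fuel a real weapon actually burns, so treat the output as order-of-magnitude only.

```python
# Order-of-magnitude comparison of explosive energy per kilogram with E = mc^2.
# Assumed textbook inputs: 1 kg of TNT = 4.184 MJ (the convention behind "kilotons"),
# about 200 MeV per U-235 fission, and 17.6 MeV per deuterium-tritium fusion.
# Real weapons burn only part of their fuel, so these are upper-bound fuel figures.

C = 2.998e8             # speed of light, m/s
MEV_TO_J = 1.602e-13    # joules per MeV
AMU_TO_KG = 1.6605e-27  # kilograms per atomic mass unit


def nuclear_j_per_kg(mev_per_reaction: float, amu_per_reaction: float) -> float:
    """Energy released per kilogram of fuel for a single nuclear reaction type."""
    return mev_per_reaction * MEV_TO_J / (amu_per_reaction * AMU_TO_KG)


TNT = 4.184e6                              # J/kg, chemical explosive
FISSION = nuclear_j_per_kg(200.0, 235.0)   # U-235, counting only the uranium mass
FUSION = nuclear_j_per_kg(17.6, 5.0)       # deuterium (2 u) + tritium (3 u)


def rest_energy_fractions() -> dict:
    """Fraction of the fuel's rest energy (m c^2 for 1 kg) each process releases."""
    mc2 = 1.0 * C**2
    return {"TNT": TNT / mc2, "fission": FISSION / mc2, "fusion": FUSION / mc2}


if __name__ == "__main__":
    for name, frac in rest_energy_fractions().items():
        print(f"{name:8s} releases {frac:.2e} of its rest energy")
    print(f"fission / TNT    ~ {FISSION / TNT:.1e}")
    print(f"fusion / fission ~ {FUSION / FISSION:.1f}")
```

With these assumed inputs, TNT releases about 5 × 10⁻¹¹ of its rest energy, fission about 0.09 percent and fusion about 0.4 percent, so fission beats TNT by a factor of about twenty million per kilogram, and fusion beats fission by a factor of about four.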

Naturally, after World War II, politicians recognized that particle physicists were the most dangerous people in the world. A single hydrogen bomb can wipe out a city like London. Particle physicists were organized under the Atomic Energy Commission (whose research role later passed to the Department of Energy) and told to find out whether even greater horrors were possible. That mission was sustained by the Cold War competition with the Soviet Union.

This work was done at particle colliders. Over time, these became the world’s largest machines, costing hundreds of millions of dollars to build and operate.

Fortunately for the survival of the human race, by the mid-eighties we knew that the hydrogen bomb was the limit. Everything discovered by the particle colliders was unstable, lasting at most a millionth of a second. However, this was bad for particle physicists. They needed a new marketing message to convince politicians to give them billions so they could keep on building and running colliders.

Given that the researchers were inspired by the prospect of blowing up the world, perhaps we should have expected what came next.

The Final Theory of Everything

Every politician knows that politics is a contest of wills. In the halls of Congress and in the White House, palpable energy is generated by these contests. Politicians know that spirituality is real.

Could that energy be tapped? Well, not according to physics. In fact, Einstein’s theories seemed to prove that spiritual energy couldn’t exist. Remove all the matter from space and there is nothing left.

Physicists knew better. Richard Feynman, the quirky theorist from Caltech, recalled going to Princeton to speak before the “Monster Minds.”

This, then, was the pitch: “We know that our theories of matter and space are incomplete. Give us money so that we can find the final theory of everything. Then we’ll know how to harness the power of will.” Now, this was absurd from the start. Will is generated by the human mind, which needs to avoid explosions at all costs. But it worked for a while. Congress is a creature of habit, and it wasn’t too much money, at first. Only a couple of hundred million dollars a year.

Then, in the mid-eighties, came the supercolliders. These were billion-dollar machines, and the international particle physics community banded together into regional coalitions to win them. In Europe, researchers at CERN promoted an upgrade to their collider. In the US, states competed to host the Superconducting Super Collider. Not surprisingly, Texas, the adopted home state of President-elect George Bush Sr., was picked as the winner in 1988.

As the price tag went up and up, the particle physics community realized that only one candidate could be built. And this is where the con started: the con that led the US to give billions of taxpayer dollars to CERN.

Nobels Oblige

Alfred Nobel was a Swedish chemist and arms manufacturer (alas, explosions again) who bequeathed his fortune to fund the Nobel Prizes. Winning the Nobel Prize in any science is one of the few ways that a scientist gains public fame. With that stature comes access to the politicians who funnel taxpayer dollars into research. Universities and laboratories, naturally, compete to hire Nobel Prize winners. When they can’t hire them, they try to create them.

Inevitably, the Nobel Prize is a highly political award. It’s not just the ideas that count.

The Nobel Prize for Physics is dominated by fundamental physics. Discovering a new particle or force is almost guaranteed to be followed by an invitation to Stockholm.

Motive: billions of taxpayer dollars for the next particle collider. Opportunity: given that politicians don’t understand a single thing about particle physics, winning a Nobel Prize establishes prestige that could determine the flow of those dollars. Means: the existing collider at CERN. Sounds like a recipe for crime.

Exposing the grift is difficult because particle physicists speak an arcane language. I will try to keep it to a minimum, but to be able to confront the perpetrators of this crime against the American taxpayer, we need to understand some of that language.

As well as particles of matter called fermions, the universe contains fields. These fields come in packets called bosons, and bosons allow matter to interact. As a practical example, when you chew food, the atoms of your teeth never actually touch the food; they push it apart by exchanging photons, the bosons of the electromagnetic field.

How do physicists prove that they have discovered a new fermion?

The concept is built upon Einstein’s equation, E = mc². To achieve perfect conversion of mass to energy, physicists discovered that they could make antimatter that, when combined with normal matter, annihilates completely.

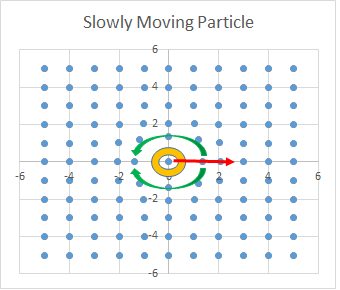

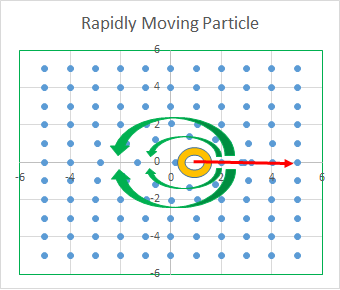

How to make new kinds of matter? In this regard, the most interesting bosons are the W and Z. Through these so-called weak interactions, any kind of matter can be created. The only requirement is that enough energy exists to run annihilation in reverse. This is called “pair creation.” From the pure energy of the Z, matter and antimatter are created.

To find a new kind of fermion, a collider first manufactures antimatter. It then accelerates the antimatter and the matter through electric fields that add energy of motion, forming beams. Finally, the beams are steered to an intersection point at the center of a detector. At random, some of the particles annihilate. Both the energy of mass and the energy of motion are available to create new fermions.
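The collider recipe above can be made concrete with standard relativistic kinematics. In natural units (energies and masses in GeV, c = 1), the energy available for making new particles is the center-of-mass energy √s, and a particle-antiparticle pair of mass m can only be created when √s ≥ 2m. This sketch compares two beams meeting head-on with a beam striking a stationary target; the function names are illustrative, and the W mass of 80.4 GeV is the standard measured value.

```python
import math

# Natural units: energies and masses in GeV, with c = 1.
# Illustrative helper names; a textbook sketch, not any laboratory's software.


def com_energy_colliding(e1: float, e2: float, m1: float = 0.0, m2: float = 0.0) -> float:
    """Center-of-mass energy sqrt(s) for two beams of energy e1, e2 meeting head-on."""
    p1 = math.sqrt(max(e1**2 - m1**2, 0.0))  # momentum of beam 1 along +z
    p2 = math.sqrt(max(e2**2 - m2**2, 0.0))  # momentum of beam 2 along -z
    s = (e1 + e2) ** 2 - (p1 - p2) ** 2      # invariant mass squared of the pair
    return math.sqrt(s)


def com_energy_fixed_target(e_beam: float, m_beam: float, m_target: float) -> float:
    """sqrt(s) when a beam of energy e_beam strikes a target particle at rest."""
    s = m_beam**2 + m_target**2 + 2.0 * e_beam * m_target
    return math.sqrt(s)


def can_pair_produce(sqrt_s: float, mass: float) -> bool:
    """Kinematic threshold: a particle-antiparticle pair of this mass needs sqrt(s) >= 2 * mass."""
    return sqrt_s >= 2.0 * mass


if __name__ == "__main__":
    m_e = 0.000511  # electron (and positron) mass, GeV
    m_w = 80.4      # W boson mass, GeV
    collider = com_energy_colliding(100.0, 100.0, m_e, m_e)
    fixed = com_energy_fixed_target(100.0, m_e, m_e)
    print(f"head-on 100 + 100 GeV: sqrt(s) = {collider:.1f} GeV, W pair possible: {can_pair_produce(collider, m_w)}")
    print(f"100 GeV on fixed target: sqrt(s) = {fixed:.2f} GeV, W pair possible: {can_pair_produce(fixed, m_w)}")
```

With these assumed numbers, a 100 GeV positron beam meeting a 100 GeV electron beam head-on gives √s = 200 GeV, above the roughly 161 GeV needed for a W pair, while the same positron striking an electron at rest gives only about 0.32 GeV. In the head-on case none of the energy of motion is spent moving the collision products as a whole, which is why colliders rather than fixed targets are used to reach new mass scales.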

The process is rote. Build a collider. Use the acceleration to control the energy of the collisions. Analyze the data coming out of your detectors. When you get to the power limit of your collider, go to Congress and ask for more money.

The challenge is that sometimes beams collide without producing anything interesting, filling your detectors up with noise. Fortunately, there is a specific signal that occurs most frequently when creating a new kind of fermion. The detectors will see two photons moving in opposite directions.

Remember that last fact. When a new kind of fermion is found, we see two photons moving in opposite directions.

From the start of particle physics until 1987, eight fermions were discovered. The first six showed a definite generational pattern: a light lepton followed by two heavier quarks. The first triad consists of the electron, down, and up. The second generation contains the muon, strange, and charm. In the third generation, experiments had detected the tau and bottom. The field was racing to find the third member of that generation, the top.

Along the way, there was another important discovery. The weak interactions are weak because the W and Z themselves have large masses. On the way to finding the top, the bosons were confirmed, at energies of 80 and 93 GeV. (The units are not important. Remember the numbers.) For purposes of understanding the fraud, I emphasize that the W and Z do not produce two photon signals.

The W and Z results confirmed theoretical predictions, convincing politicians that the field was on a solid footing. For this, Carlo Rubbia shared the 1984 Nobel Prize in Physics with Simon van der Meer.

I was in the last year of my graduate studies in 1987 when CERN announced the discovery of the top, publishing its claim in Physical Review. One of my thesis advisors, Mary Kay Gaillard, had come to UC Berkeley through CERN. That connection brought researchers from CERN who described the result. I was shocked to hear that the data did not demonstrate the required two-photon signal. Furthermore, the accelerator energy during the study was 346 GeV, exactly twice the sum of the W and Z masses.

I trusted Mary Kay. In my presence, she denounced the evils of nuclear weapons. I went to her and voiced my confusion. How could this be a new particle? It looked like a collection of four weak bosons, exactly at the energy that you would predict.

Her answer amounted to, “Go home little boy. The adults are playing politics.”

As the leader of CERN, winner of the Nobel Prize, and lead author of the top paper, Carlo Rubbia was the kingpin of particle physics. And CERN won the competition for the next collider.

Higgsy Pigsy

Let’s return to the political context now. Remember: the Cold War was ending. Everyone knew that no new bomb technology was coming out of particle physics. The goal was now a theory of everything. How long would that political motivation last?

Given the abstractness of the motivation, the field needed a long runway in its next accelerator. This was part of the strategy with the top announcement. The heaviest fermion to that point was the bottom quark, at 4.2 GeV. That the fraudulent “top” was all the way up at 173 GeV suggested that there was much more to come, if only politicians would fund the work.

The proposed upgrade of CERN was not modest. It set a 20-year goal of attaining a sixty-fold increase in the collider’s power. Bedazzled by Nobel Prizes and the pretty pictures produced by taxpayer-funded science propagandists, the politicians were persuaded to comply.

Then came the turn-on: the first beams circulated in 2008, and high-energy collisions began in 2010. The machine was ramped up through its energy range, scanning for new particles all the way up to its limit.

Nothing. Zero. Zilch. A ten-billion-dollar boondoggle, funded in no small part by the American taxpayer.

Except then, after a summer spent scanning higher energies, the machine was turned down to 125 GeV. Be clear: this was an energy accessible by the earlier collider. At that energy, the detectors showed a two-photon signal. Detecting this signal is a primary design criterion for every detector. As it occurred at lower energy than the signal announced as the “top,” it must have been known before that study.

Demonstrating their imperviousness to shame, the CERN collaborations published the 125 GeV signal in Physics Letters B and announced it as the long-sought “Higgs particle.”

“Really,” I thought, “you are going to double down on your fraud?”

Remember: two photons is the signal for a new particle. The “Higgs” is what the top should have looked like. In fact, by the standards of the field, I should be awarded the Nobel Prize for recognizing that it is the top.

Nonetheless, the shameless perpetrators began their pressure campaign. They leaned on the Nobel committee to recognize Peter Higgs, the developer of the field’s minimally coherent theory of particle mass. In the background, Carlo Rubbia, CERN’s prior laureate, went to the funding panels, demanding, “You know, this Higgs is kind of weird. We need more money for another collider.” The Nobel committee, having acceded to the Higgs award, heard of this and protested, “We are about to award the Nobel Prize for this discovery. Is it the Higgs or is it not the Higgs?” Rubbia backtracked.

Only temporarily, however. Read the popular science press and every week you will see a propaganda piece promoting the next collider at CERN. After all, the full-time job of their taxpayer-funded propagandists is to secure funding for that collider.

Omerta

The question, in any massive conspiracy, is how the community maintains discipline. This is a matter of leverage.

You see, university posts in particle physics are not funded directly. They are funded as an add-on to collider construction and operation budgets.

For twenty years, I have been trying to get particle physics out of the rut of superstring theory – a theory that is certifiably insane for its violations of everything that we observe about the universe. In the one instance that I was able to get into dialog with a theorist, I was told “I know that you are right, but if I work with you, I will lose my funding.”

CERN is the only game in town. Anything that does not build to more construction is not funded. Pure and simple, Rubbia is the godfather of particle physics. If you don’t play, he won’t pay.

It is time to stop the grift. The next machine will cost the US taxpayer tens of billions of dollars. Enough is enough. Call your local congresspeople and demand that they investigate and shut this down. We have more pressing problems to worry about.